Let’s Try AI was conceived as a learning space where students could engage with generative artificial intelligence not only as users but also as critical thinkers. The original idea was to move beyond tutorials and productivity tips and instead create a resource that encourages curiosity, questioning, and reflection. The project treats AI as a socio-technical system, one that shapes how knowledge is produced, evaluated, and valued in academic contexts.

Rather than presenting AI as neutral or inevitable, the site invites students to ask meaningful questions: How does this technology work? What assumptions are built into it? Who benefits from its use, and who might be excluded? In this way, Let’s Try AI positions AI literacy as a form of academic and civic literacy.

Motivation and context

The motivation for this project emerged from the rapid expansion of generative AI tools within higher education and the uncertainty this created for students and instructors alike. Many learners began experimenting with tools like ChatGPT without clear guidance, while instructors grappled with how to respond pedagogically rather than reactively.

Let’s Try AI responds to this moment by offering an alternative to both prohibition and uncritical adoption. It provides a structured space where students can learn about AI, practice using it thoughtfully, and reflect on its implications for learning, authorship, integrity, and equity. The site reinforces the importance of checking course-specific policies and instructor expectations, recognizing that responsible AI use is always contextual.

My role in the project

I had the pleasure of creating this resource as part of my role as a Learning Technologist at Thompson Rivers University. The site was developed using TRUbox, allowing for an open, accessible, and student-focused design that aligns with institutional values around learning, inclusion, and academic integrity.

My work on this project brought together instructional design, critical digital pedagogy, and emerging technology practices. From conceptual framing to content design and tool integration, the goal was to create a resource that feels supportive rather than prescriptive, and exploratory rather than authoritative. It was not a solo effort: the vision of department head Brian Lamb and of the rest of the team was invaluable.

Purpose and intended audience

The primary audience for Let’s Try AI is undergraduate and graduate students at Thompson Rivers University who are encountering generative AI in their academic work. The resource is particularly relevant for students who may feel unsure about what is allowed, what is ethical, or how to use AI without compromising their learning.

At the same time, the open nature of the site makes it useful for instructors, librarians, educational developers, and staff interested in AI literacy. Its modular structure allows different audiences to engage with the content in ways that align with their roles and responsibilities.

Learning structure: Learn, Practice, Create

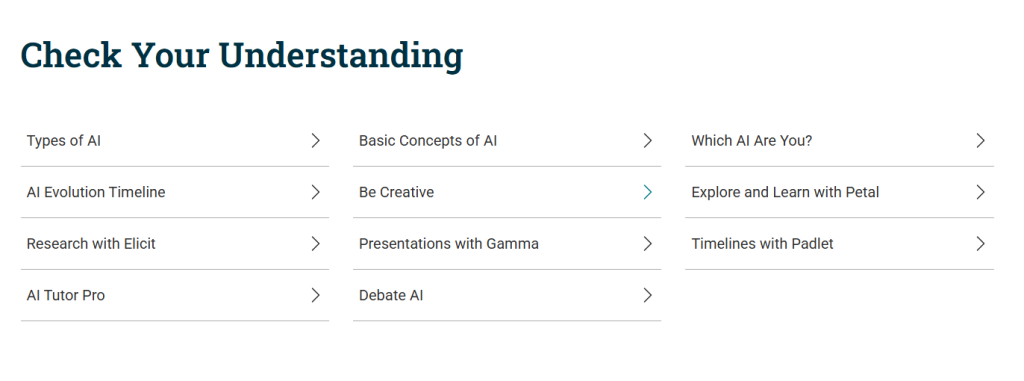

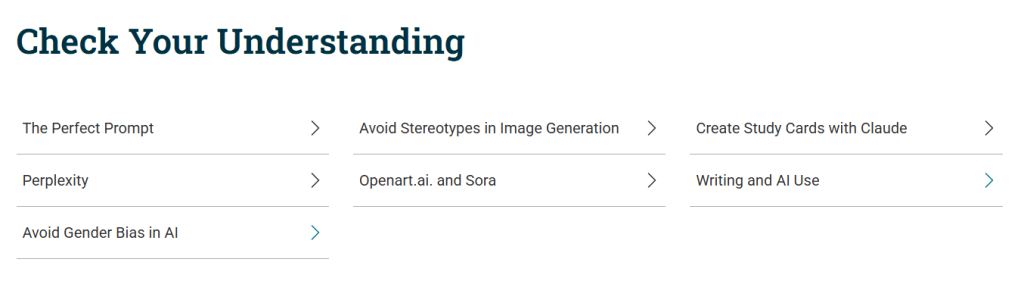

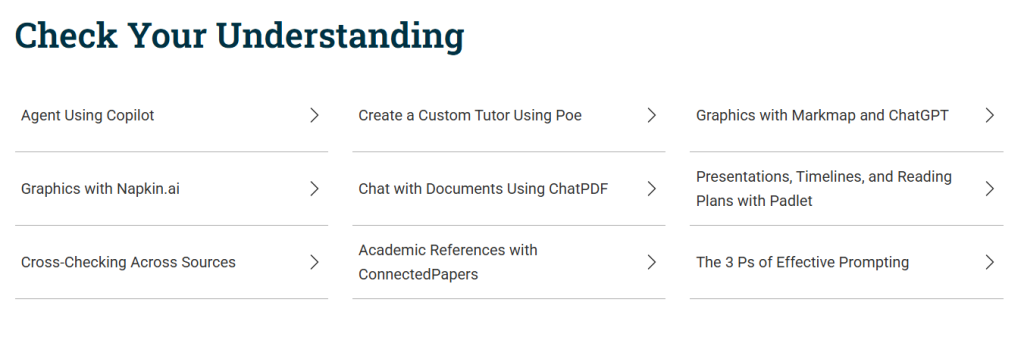

The website is organized around three interconnected sections that reflect a progression from understanding to application to reflection.

Learn introduces the foundations of generative AI, explaining how these systems are trained, how data is managed, and why AI-generated outputs can be incomplete, biased, or incorrect. This section emphasizes conceptual understanding as a prerequisite for responsible use.

Practice offers guided opportunities to experiment with GenAI tools while following clear ethical and pedagogical principles. Practice is framed as a way to test assumptions, evaluate outputs critically, and develop discernment, rather than as a shortcut for academic work.

Create focuses on strategies for using AI in creative and reflective ways that support learning goals. This includes idea generation, organization, and exploration, always within the boundaries defined by course policies and instructor guidance.

Pedagogical approach

Let’s Try AI is grounded in the belief that learning about AI is a situated, social, and reflective process. The resource encourages students to remain active agents in their learning, responsible for evaluating information, making decisions, and acknowledging limitations.

The site also models transparency by explicitly discussing risks related to bias, privacy, and overreliance on automated systems. In doing so, it reinforces that ethical AI use is not just a technical skill, but a habit of critical thinking.

Generative AI tools explored in the project

To support hands-on learning and critical comparison, the site references and demonstrates a wide range of generative AI tools, including:

- ChatGPT
- Copilot
- Claude
- Perplexity
- Poe
- ChatPDF
- Elicit
- Connected Papers
- Petal
- Napkin
- Markmap
- Padlet
- Gamma
- Leonardo
- Sora

These tools are used for educational, illustrative, and reflective purposes. They are not presented as authoritative sources, but as examples through which students can examine differences in output, bias, transparency, and suitability for academic tasks.

Attribution and open collaboration

Let’s Try AI incorporates and clearly attributes selected materials from the University of Toronto’s Coursework and GenAI: A Practical Guide for Students. This practice reflects the project’s commitment to openness, ethical reuse, and collaborative knowledge-building across institutions.

Attribution is treated not as a formality, but as a pedagogical act that reinforces respect for intellectual labour and shared educational efforts.

Technology as support, not authority

While technology plays an important role in this project, it is intentionally framed as supportive rather than directive. AI tools are used to enhance accessibility, illustrate concepts, and prompt reflection, while human judgment, academic values, and pedagogical intent remain central.

The site consistently reinforces that students are responsible for their work, their choices, and their learning.

A space for shared learning and dialogue

Let’s Try AI functions as a shared learning space that supports conversation among students, instructors, librarians, and educational developers. Rather than offering fixed rules or universal answers, it provides frameworks, guiding questions, and examples that help learners develop their own criteria for engaging with generative AI in thoughtful and responsible ways.

In this sense, the project contributes to a broader culture of ethical, reflective, and community-oriented engagement with emerging technologies in higher education.